MCP Apps: We Spent $100B on AI to Bring Back the Iframe

In 2026, Karpathy is teaching the world how transformers work on YouTube. Boris is shipping Claude Code that ships software end-to-end. The model on my laptop can write a working compiler in the time it takes me to read this paragraph. We have collectively poured something like a hundred billion dollars into training runs, GPU farms, and the most powerful coding tools ever assembled.

The frontier of AI just shipped its frontend story.

It's an iframe.

I'm not making this up.

The apps die. The frameworks survive.

Every developer I know has a graveyard. Not of bad apps — useful ones, that solved real problems. The internal tool built in Cordova that nobody could rebuild once the toolchain rotted. The Xamarin app shelved when Microsoft renamed Xamarin for the third time. The Angular 1 dashboard the team started porting to Angular 2 and never finished. The Flutter side project that needed one dependency upgrade nobody ever had time for.

The components inside those apps were always the same. Auth. Storage. A list view, a detail view, a form with five fields and a submit button. We re-implemented them in each stack's idioms — provider here, store there, hook next, signal after — and every re-implementation fused the UI to the stack so tightly that porting later wasn't porting. It was writing the whole app again.

So we didn't port. We abandoned. The framework kept shipping. The team picked the next one. That's been the deal for twenty years.

Then the chat happened.

~~Attention~~ Iframe Is All You Need

With apologies to Vaswani et al.

What I missed about MCP is that it has two audiences. The model needs structured context to reason — that's the half everyone has been building. The other half is the human staring at the chat, who needs to actually understand what just happened.

There's a quote Karpathy keeps citing that fits here: you can outsource your thinking but you cannot outsource your understanding. A paragraph from the model carries fine into the model's next turn — that's the model's reasoning, and the model is the audience for that. It is not enough for the person who has to look at the answer and decide what to do with it. The chat is where understanding has to happen, and a wall of text is not where understanding happens.

That's what the iframe is for. The structured payload still goes back to the model through the AppBridge so the agent can keep talking. The UI bundle is for the human — the chart they read, the SQL they verify, the chips they click instead of retyping the question. MCP became a UI layer because the chat is where humans do the thinking they can't hand off.

The chat host is doing in 2026 what the browser did in 1996. It already has the user. It already has identity. It already has the model. What it needed was a sandboxed render frame, a clean message-passing protocol, and a security boundary you could actually reason about. Once it had those, every "platform" you used to ship to became a single render target.

SEP-1865 specifies exactly that. Your tool tags itself with a ui:// resource URI. The host pulls down the bundle, drops it into a sandboxed iframe, and routes postMessage traffic between the iframe and the model. Your code is HTML. The host is the runtime. Auth, storage, and model access are delegated through the spec.
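To make the tagging concrete, here is a minimal sketch of the two messages involved, written as plain TypeScript objects rather than through any SDK: the tool entry a server would advertise in tools/list, and the resources/read result the host gets back for the ui:// URI. The field layout follows my reading of SEP-1865; the URI `ui://aviation/answer-card`, the description text, and the MIME type string are assumptions, not copied from the author's server.

```typescript
// Minimal sketch of the two messages behind an MCP App, as plain objects.
// Field names follow my reading of SEP-1865; the URI, description, and the
// MIME type string are assumptions, not copied from the author's server.

interface ToolDescriptor {
  name: string;
  description: string;
  inputSchema: {
    type: "object";
    properties: Record<string, unknown>;
    required?: string[];
  };
  // The UI link lives in _meta, so hosts that don't support MCP Apps ignore it.
  _meta?: { ui?: { resourceUri: string } };
}

interface ReadResourceResult {
  contents: { uri: string; mimeType: string; text: string }[];
}

const UI_URI = "ui://aviation/answer-card"; // hypothetical resource URI

// What tools/list would advertise for the interactive tool.
const askAviation: ToolDescriptor = {
  name: "ask_aviation",
  description: "Answer a natural-language question about US aviation data.",
  inputSchema: {
    type: "object",
    properties: { question: { type: "string" } },
    required: ["question"],
  },
  _meta: { ui: { resourceUri: UI_URI } },
};

// What resources/read would return when the host fetches that URI:
// the HTML bundle it drops into the sandboxed iframe.
function readUiResource(uri: string, html: string): ReadResourceResult {
  if (!uri.startsWith("ui://")) {
    throw new Error(`not a UI resource: ${uri}`);
  }
  return { contents: [{ uri, mimeType: "text/html;profile=mcp-app", text: html }] };
}
```

As I understand the design, declaring the UI in the tool listing rather than inside each result lets the host fetch, review, and cache the bundle before any tool call happens.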

One endpoint. Every chat client renders it the same way.

I built it to make sure I wasn't hallucinating.

The screenshots, in order

An MCP server over the FAA Aircraft Registry, live at chris.towles.dev/mcp/aviation. Same endpoint, two host surfaces, same render.

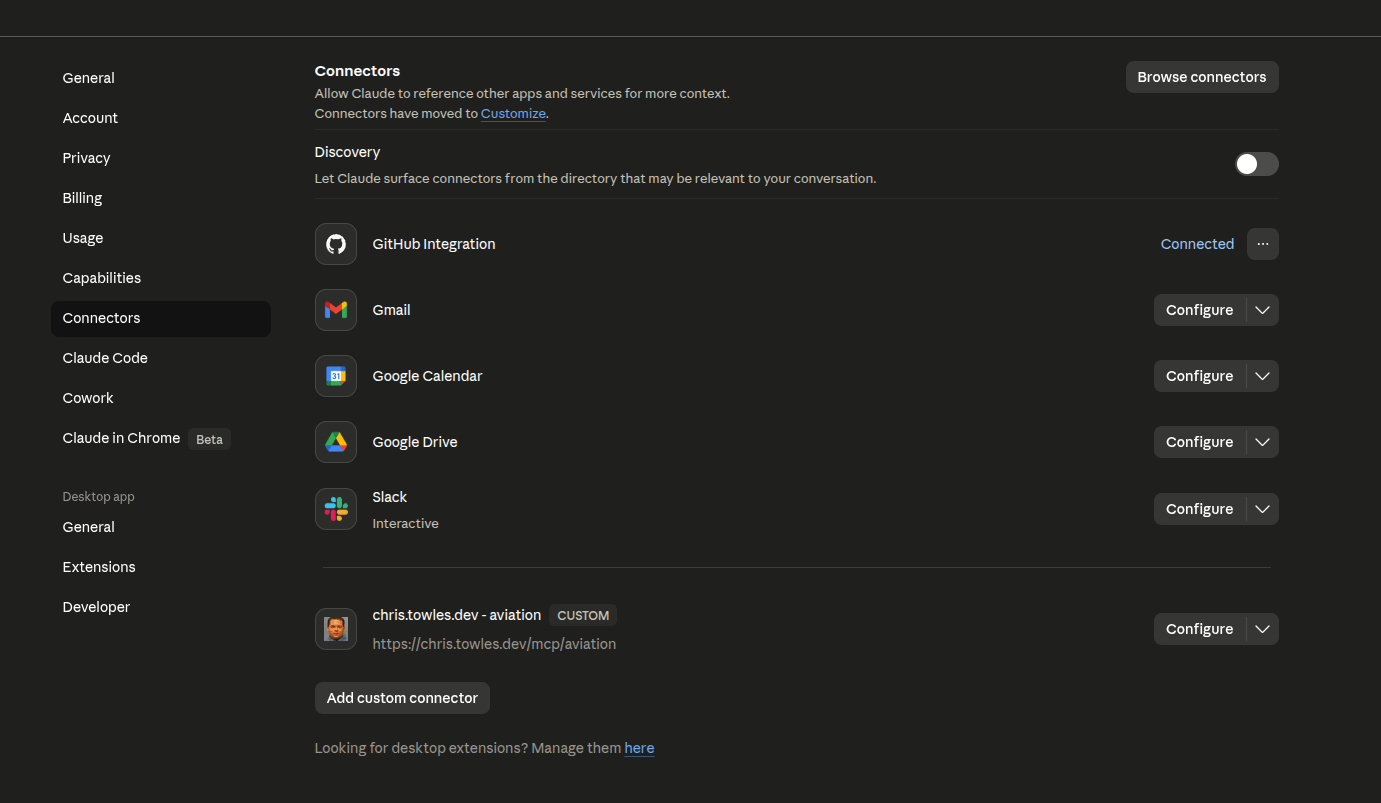

Adding it to Claude Desktop is no different from any other MCP server — Settings → Connectors, custom URL:

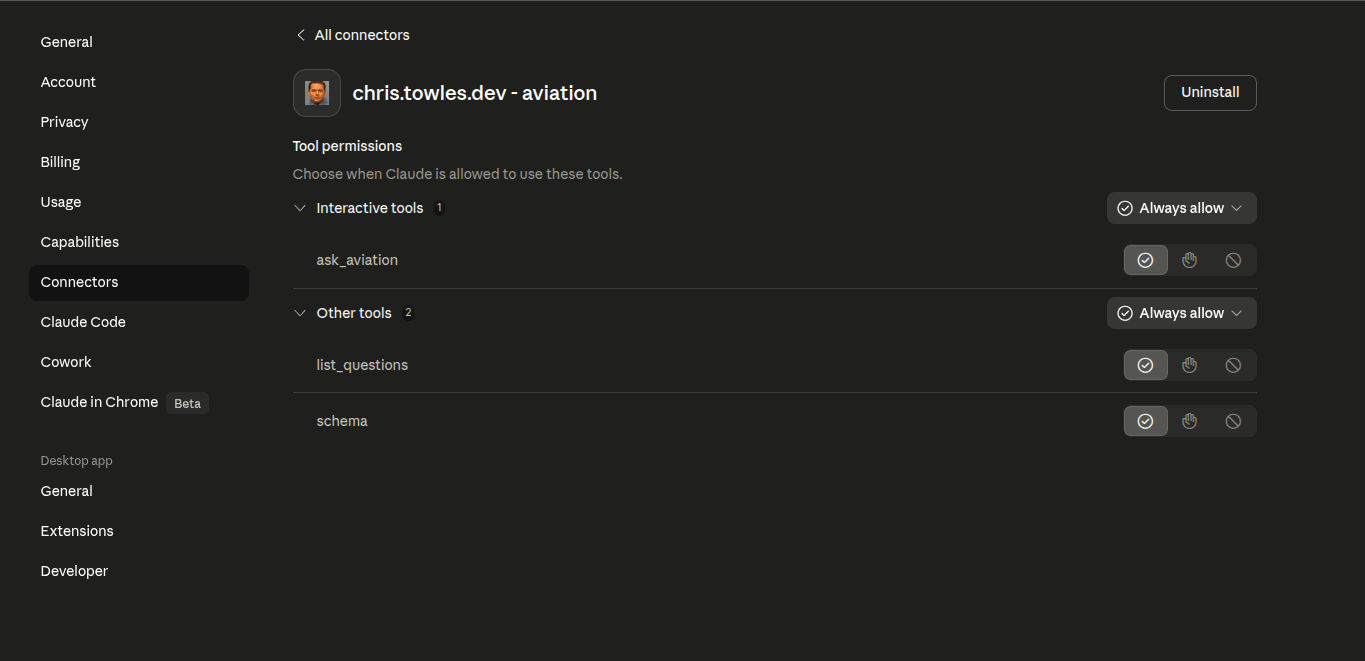

Open the connector and the host shows me something I didn't write a line of code for:

ask_aviation is filed under Interactive tools. The other two are under Other tools. The host is reading the _meta.ui.resourceUri off my registration and labeling tools that ship a UI bundle differently from tools that return JSON. The user gets a different consent prompt for each. I didn't ask for that. The SDK tags it, the host honors it, and the plumbing reaches all the way down.

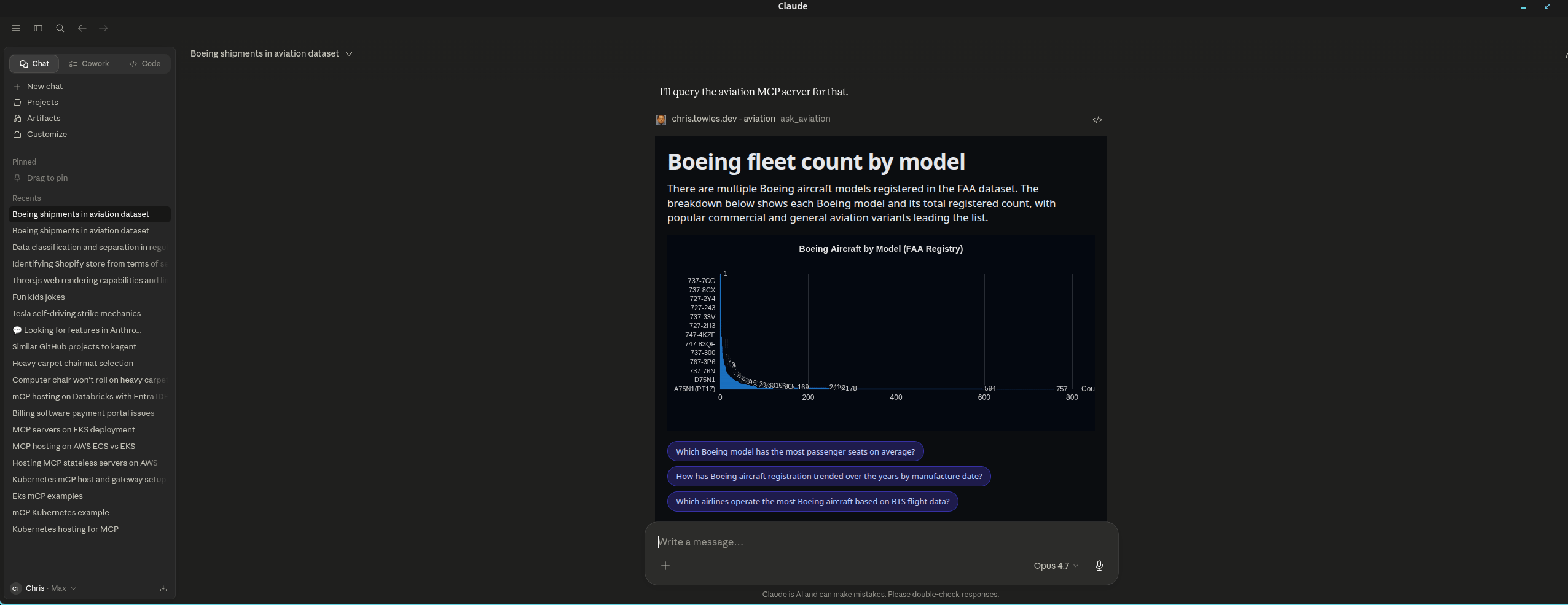
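The Interactive/Other split needs nothing from the server beyond that one metadata field. A sketch of how a host might derive it, under the assumption that it keys only on a ui:// `_meta.ui.resourceUri`; this is a guess at the behavior, not Claude Desktop's source, and the two non-UI tool names are hypothetical.

```typescript
// Sketch of how a host *might* split tools into "Interactive" and "Other",
// keyed only on _meta.ui.resourceUri. The labels mirror what Claude Desktop
// shows; the logic is a guess, not its actual source.

interface ListedTool {
  name: string;
  _meta?: { ui?: { resourceUri?: string } };
}

interface ToolGroups {
  interactive: string[];
  other: string[];
}

function groupTools(tools: ListedTool[]): ToolGroups {
  const groups: ToolGroups = { interactive: [], other: [] };
  for (const tool of tools) {
    const uri = tool._meta?.ui?.resourceUri;
    // Only a ui:// URI marks a tool as interactive; everything else is plain JSON.
    if (typeof uri === "string" && uri.startsWith("ui://")) {
      groups.interactive.push(tool.name);
    } else {
      groups.other.push(tool.name);
    }
  }
  return groups;
}

// The two non-UI tool names are hypothetical; the post doesn't name them.
const groups = groupTools([
  { name: "ask_aviation", _meta: { ui: { resourceUri: "ui://aviation/answer-card" } } },
  { name: "describe_schema" },
  { name: "run_sql" },
]);
console.log(groups);
```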

Then the answer:

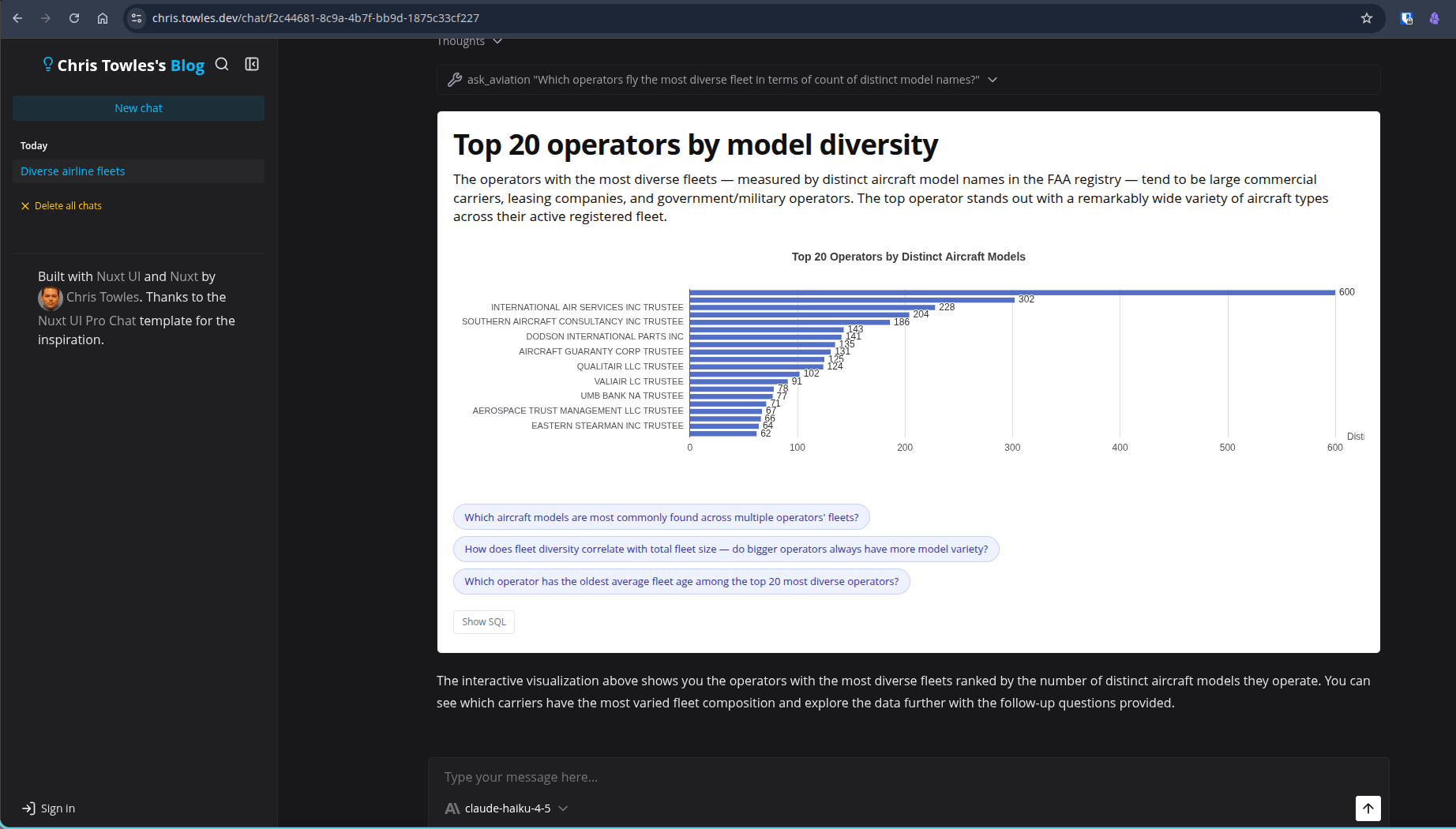

That card is an iframe my server shipped. The chart paints from a structured option object the model emitted. The "show SQL" toggle, the loading state, the follow-up chips — all in my bundle. The chips aren't decoration: clicking one fires ui/message (a spec method), the host adds it to the conversation as the next user turn, and the round trip happens again without the user touching the keyboard.
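A sketch of the iframe side of one chip click: build a JSON-RPC `ui/message` request and hand it to the parent frame. The params shape (`role` plus a content array) is my reading of SEP-1865 and may not match your SDK exactly; `post` is an injected stand-in for `window.parent.postMessage` so the sketch runs outside a browser, and the chip text in any usage is hypothetical.

```typescript
// Sketch of the iframe side of a follow-up chip: build a JSON-RPC "ui/message"
// request and hand it to the parent frame. The params shape is my reading of
// SEP-1865 and may differ in your SDK. `post` stands in for
// window.parent.postMessage so the sketch runs outside a browser.

interface JsonRpcRequest {
  jsonrpc: "2.0";
  id: number;
  method: string;
  params: unknown;
}

type Post = (message: JsonRpcRequest, targetOrigin: string) => void;

let nextId = 1;

function chipMessage(text: string): JsonRpcRequest {
  return {
    jsonrpc: "2.0",
    id: nextId++,
    method: "ui/message",
    // The host appends this as the next user turn in the conversation.
    params: { role: "user", content: [{ type: "text", text }] },
  };
}

function onChipClick(text: string, post: Post, targetOrigin: string): JsonRpcRequest {
  const msg = chipMessage(text);
  // Pass an explicit origin rather than "*" so only the expected parent
  // frame receives the message.
  post(msg, targetOrigin);
  return msg;
}
```

In a browser the bundle would wire `onChipClick(label, (m, o) => window.parent.postMessage(m, o), parentOrigin)` to each chip's click handler.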

The same bundle renders in my blog's own chat. Different MCP client, same iframe, same behavior.

That's the bidirectional UI contract working — one server, two MCP clients, identical render. Claude Desktop didn't get a privileged path. The blog's chat acts as its own MCP client and gets the same iframe out the back end.

The parts I had to actually figure out

A handful of things from building this that are worth knowing before you try.

The sandbox has to live on a different origin. SEP-1865 is explicit about this — the host iframe and your bundle MUST come from separate origins, or the security boundary doesn't exist. I tried serving the proxy at /sandbox/... on the blog's own origin first, because it was the cheap path. It's also the broken path. I ended up spinning up sandbox.towles.dev as a separate Cloudflare Pages site whose only job is document.write of the inner iframe with the right CSP headers. Without that subdomain, neither host renders the bundle.
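The boundary comes down to two origin comparisons: the sandbox URL must not share an origin with the host page, and the host should drop any postMessage whose `event.origin` isn't the sandbox. A minimal sketch using the standard `URL` class; the function names are mine, and the hostnames are the ones from this post.

```typescript
// Sketch of the two origin checks the sandbox boundary depends on.
// Function names are illustrative; hostnames are the ones from this post.

// The sandbox proxy must not share an origin with the host page, otherwise
// the bundle could reach the host's cookies, storage, and DOM.
function isSeparateOrigin(hostUrl: string, sandboxUrl: string): boolean {
  return new URL(hostUrl).origin !== new URL(sandboxUrl).origin;
}

// On the host side, only accept postMessage traffic whose event.origin is
// the configured sandbox origin; drop everything else.
function acceptFromSandbox(eventOrigin: string, sandboxUrl: string): boolean {
  return eventOrigin === new URL(sandboxUrl).origin;
}

console.log(isSeparateOrigin("https://chris.towles.dev/chat", "https://sandbox.towles.dev/proxy.html"));
console.log(isSeparateOrigin("https://chris.towles.dev/chat", "https://chris.towles.dev/sandbox/proxy.html"));
```

The second call is the cheap path the post describes trying first: same scheme, host, and port, so the check fails and the isolation is gone. In the host, `acceptFromSandbox(event.origin, SANDBOX_URL)` would be the first line of the `message` listener.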

MCP tool calls block the model. This is the single biggest constraint shaping the rest of the design. If ask_aviation takes seven seconds — DuckDB cold start, LLM emitting SQL plus the ECharts option object, query execution — the chat sits frozen for seven seconds. So the tool returns instantly with { pending: true, queryUrl }. The iframe, once mounted, POSTs that URL on its own and reads progress + the final structured payload over SSE. The model gets the result back through the AppBridge once the iframe has it. From the chat's point of view the tool finished in milliseconds and the surface filled in afterward.
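A sketch of both halves of that pattern: the tool result that returns immediately with `{ pending: true, queryUrl }`, and a small Server-Sent Events parser the iframe could run over the streamed response. The `/api/aviation/query/` path and the `progress`/`result` event names are hypothetical; the parser handles `event:`, multi-line `data:`, and comment lines, and works on a complete buffer.

```typescript
// Sketch of "return instantly, stream later". The query path and the event
// names used with it are hypothetical.

interface PendingResult {
  content: { type: "text"; text: string }[];
  structuredContent: { pending: true; queryUrl: string };
}

// Tool handler side: no query runs here, so the tool call finishes at once.
function pendingToolResult(queryId: string): PendingResult {
  const queryUrl = `/api/aviation/query/${encodeURIComponent(queryId)}`;
  return {
    content: [{ type: "text", text: "Query started; results will render in the card." }],
    structuredContent: { pending: true, queryUrl },
  };
}

interface SseEvent {
  event: string;
  data: string;
}

// Iframe side: minimal SSE parser for a complete text buffer. Events are
// separated by a blank line, "event:" names the event (default "message"),
// repeated "data:" lines are joined with "\n", and ":" comment lines are skipped.
function parseSse(buffer: string): SseEvent[] {
  const events: SseEvent[] = [];
  for (const block of buffer.split(/\r?\n\r?\n/)) {
    let event = "message";
    const data: string[] = [];
    for (const line of block.split(/\r?\n/)) {
      if (line === "" || line.startsWith(":")) continue;
      const idx = line.indexOf(":");
      const field = idx === -1 ? line : line.slice(0, idx);
      let value = idx === -1 ? "" : line.slice(idx + 1);
      if (value.startsWith(" ")) value = value.slice(1);
      if (field === "event") event = value;
      else if (field === "data") data.push(value);
    }
    if (data.length > 0) events.push({ event, data: data.join("\n") });
  }
  return events;
}
```

Because `EventSource` only issues GET requests, a POST like the one described needs `fetch` plus a stream reader; a streaming version would also carry any partial event over to the next chunk instead of parsing a whole buffer at once.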

DuckDB on GCS authenticates via HMAC, not the service account. DuckDB's gs:// path goes through the S3-compat API, which only accepts HMAC keys. ADC doesn't work — there's no Google-OAuth path through that codebase. Terraform mints an HMAC key for the Cloud Run service account, stores it in Secret Manager, and injects it as an env var. Until I read the DuckDB httpfs source, this looked like a meaningless SignatureDoesNotMatch and a wasted afternoon.
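With the HMAC key injected as environment variables, the DuckDB side is a single `CREATE SECRET (TYPE gcs, KEY_ID ..., SECRET ...)` statement, which is DuckDB's secrets-manager syntax. A sketch that builds it; the env var names `GCS_HMAC_KEY_ID` / `GCS_HMAC_SECRET` and the secret name `gcs_hmac` are hypothetical stand-ins for whatever Terraform actually injects.

```typescript
// Sketch: build DuckDB's GCS secret from HMAC credentials in the environment.
// Env var names and the secret name are hypothetical.

function sqlString(value: string): string {
  // Single-quoted SQL literal with embedded quotes doubled.
  return `'${value.replace(/'/g, "''")}'`;
}

function gcsSecretSql(env: Record<string, string | undefined>): string {
  const keyId = env.GCS_HMAC_KEY_ID;
  const secret = env.GCS_HMAC_SECRET;
  if (!keyId || !secret) {
    // Fail loudly at boot instead of surfacing SignatureDoesNotMatch later.
    throw new Error("GCS HMAC credentials missing from environment");
  }
  return `CREATE SECRET gcs_hmac (TYPE gcs, KEY_ID ${sqlString(keyId)}, SECRET ${sqlString(secret)});`;
}
```

Run the statement once per connection before the first gs:// read, and keep the returned string out of logs, since it contains the secret in plain text.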

Cold-start latency is real. DuckDB httpfs takes two to five seconds to spin up a fresh GCS connection on a cold container. Stack that on top of an actual query and you've blown past anyone's patience for a chat answer. The fix is a Nitro startup hook that runs INSTALL httpfs and a tiny read_parquet against a one-row file as soon as the container boots. By the time a request arrives, httpfs is warm. Cloud Run's min_instances=1 keeps at least one container alive, so most users never see the cold path at all.
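A sketch of the warm-up step, split into a pure list of statements and a runner that accepts any async SQL executor (for example, a thin wrapper over your DuckDB connection). In Nitro the runner would be called from a server plugin registered with `defineNitroPlugin`. The warm-up file path is hypothetical, and the read assumes GCS credentials are already configured on the connection.

```typescript
// Sketch of a boot-time DuckDB warm-up. The parquet path is hypothetical and
// the connection is assumed to already have GCS credentials configured.

function warmupStatements(warmupParquetUrl: string): string[] {
  return [
    "INSTALL httpfs;",
    "LOAD httpfs;",
    // A one-row read forces httpfs to open a real GCS connection now,
    // instead of on the first user request.
    `SELECT * FROM read_parquet('${warmupParquetUrl.replace(/'/g, "''")}') LIMIT 1;`,
  ];
}

// `run` is any async executor; returns elapsed milliseconds for boot logging.
async function runWarmup(
  run: (sql: string) => Promise<unknown>,
  warmupParquetUrl: string,
): Promise<number> {
  const started = Date.now();
  for (const sql of warmupStatements(warmupParquetUrl)) {
    await run(sql);
  }
  return Date.now() - started;
}
```

Logging the elapsed time on boot is a cheap way to see whether a container actually paid the cold-start cost before serving traffic.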

SQL safety is layered, not clever. The model writes the SQL. So the connection is read-only, extension auto-load is disabled, the local filesystem is blocked, an AST allowlist parses every query and rejects anything that isn't a single SELECT/WITH against allowlisted relations, every query gets a LIMIT 10000 injected if it doesn't have one, and there's an AbortController + DuckDB interrupt() timeout. None of those layers is trusted on its own. You can break one. You can't break all of them in a way that hits production data, because there is no production data — the whole bucket is read-only public-domain Parquet.
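A simplified stand-in for two of those layers, the statement allowlist and the LIMIT injection, plus the DuckDB settings that correspond to the extension and filesystem lockdown. The author's allowlist parses an AST; this sketch uses string checks only, so it is weaker (it cannot check relations, and a semicolon inside a string literal gets rejected). The setting names are real DuckDB options as far as I know; verify them against your version.

```typescript
// Simplified stand-in for the statement allowlist and LIMIT injection.
// String checks only, weaker than an AST allowlist; illustration, not policy.

// Apply after httpfs is installed and loaded; lock_configuration goes last
// because it freezes the settings above it.
const LOCKDOWN_SETTINGS = [
  "SET autoinstall_known_extensions = false;",
  "SET autoload_known_extensions = false;",
  "SET disabled_filesystems = 'LocalFileSystem';",
  "SET lock_configuration = true;",
];

const MAX_ROWS = 10000;

function guardQuery(sql: string): string {
  // Drop one trailing semicolon, then refuse anything that still has one,
  // which rules out stacked statements.
  const trimmed = sql.trim().replace(/;\s*$/, "");
  if (trimmed.includes(";")) throw new Error("multiple statements are not allowed");
  if (!/^(select|with)\b/i.test(trimmed)) throw new Error("only SELECT/WITH queries are allowed");
  // Naive: a LIMIT anywhere (even in a subquery) counts as present.
  return /\blimit\s+\d+/i.test(trimmed) ? trimmed : `${trimmed} LIMIT ${MAX_ROWS}`;
}
```

None of these checks is meant to hold on its own, which matches the point of the paragraph: each layer only has to catch what slips past the others.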

Replay doesn't refetch. When a chat reloads, the tool result isn't reissued from the server. The iframe re-renders from the static ui:// bundle (cached by the browser) and the structuredContent is replayed from what got saved alongside the message in Postgres. No tool call fires on chat reopen. The bundle is ~400KB gzipped, immutable per deploy; the browser pulls it once per session.
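A sketch of that reload path: walk the saved history and produce a render plan for every message that has both a ui:// resource and a stored `structuredContent`, with no tool call anywhere. The `StoredMessage` shape is hypothetical; the post only says the payload is saved in Postgres alongside the message.

```typescript
// Sketch of reload-time replay from stored payloads. The StoredMessage shape
// is hypothetical.

interface StoredMessage {
  id: string;
  toolName?: string;
  uiResourceUri?: string; // static ui:// bundle, served from the browser cache
  structuredContent?: Record<string, unknown>;
}

interface RenderPlan {
  messageId: string;
  uiResourceUri: string;
  payload: Record<string, unknown>;
}

function planReplay(history: StoredMessage[]): RenderPlan[] {
  const plans: RenderPlan[] = [];
  for (const msg of history) {
    // Plain chat turns (no UI resource or payload) render as text; no plan needed.
    if (!msg.uiResourceUri || !msg.structuredContent) continue;
    plans.push({
      messageId: msg.id,
      uiResourceUri: msg.uiResourceUri,
      payload: msg.structuredContent,
    });
  }
  return plans;
}
```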

The same /mcp/aviation endpoint serves Claude Desktop and the blog's own chat. Different MCP clients, same tools/call, same CallToolResult, same ui:// resource. That's the part I most wanted to validate. It works.

I built it on a lakehouse, not a database

Most MCP demos read from a transactional database. That's the right shape for a notes app or a calendar tool. It is not how anyone in an enterprise actually answers analytical questions. Aviation is an analytics workload — "which operators have the oldest 737 fleets" is a GROUP BY over millions of rows, joined across two datasets, with predicate pushdown so you don't scan everything every time. Postgres can do it. It just isn't the right shape.

So the demo is built on the same pattern as a real enterprise data stack, scaled down. Parquet files in GCS instead of an Iceberg catalog. DuckDB embedded in the MCP server process instead of Snowflake or BigQuery. httpfs predicate pushdown instead of a query planner shipping work across a cluster. The shape is identical to a production lakehouse. Only the size is different.
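For a sense of what the engine actually receives, here is a sketch of the "oldest 737 fleets" question as DuckDB SQL over Parquet in GCS. The bucket name, file layout, and column names (`operator`, `model`, `year_manufactured`) are hypothetical. Selecting only the needed columns and filtering early gives DuckDB's Parquet reader the chance to skip unused columns and, where row-group statistics allow, whole row groups over httpfs.

```typescript
// Sketch: the "oldest 737 fleets" question as DuckDB SQL over Parquet.
// Bucket, path layout, and column names are hypothetical.

function oldestFleetsSql(bucket: string, minAircraft: number): string {
  // Validate the inputs that get spliced into SQL text.
  if (!/^[a-z0-9][a-z0-9._-]*$/.test(bucket)) throw new Error(`bad bucket name: ${bucket}`);
  if (!Number.isInteger(minAircraft) || minAircraft < 1) {
    throw new Error("minAircraft must be a positive integer");
  }
  return [
    "SELECT operator,",
    "       count(*) AS aircraft,",
    "       avg(year(current_date) - year_manufactured) AS avg_age_years",
    `FROM read_parquet('gs://${bucket}/faa_registry/*.parquet')`,
    "WHERE model LIKE '737%'",
    "GROUP BY operator",
    `HAVING count(*) >= ${minAircraft}`,
    "ORDER BY avg_age_years DESC",
    "LIMIT 20",
  ].join("\n");
}

console.log(oldestFleetsSql("aviation-demo", 10));
```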

OpenAI wrote about their in-house data agent in January — a custom internal tool built around their own data, permissions, and workflows, designed to take employees from question to insight in minutes instead of days. Same architectural shape as my aviation demo, much bigger numbers. The interesting analytical workload looks identical in both places: take a natural-language question, generate SQL, execute against the warehouse, render the answer. The MCP layer doesn't care whether the engine is DuckDB or Trino. The iframe doesn't care. The host doesn't care.

What changes when you scale up is exactly what you'd expect: the query engine gets bigger, data is partitioned by domain, auth wires into enterprise SSO, and audit lands in your existing observability. The contract (tools/call, CallToolResult, ui:// resource) stays.
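A sketch of the part of that contract the engine never sees: a CallToolResult carrying a short text fallback in `content` (for the model and for clients without UI support) and the full answer in `structuredContent` for the iframe. The payload field names `sql`, `rows`, and `chartOption` are hypothetical; the rows could come from DuckDB, Trino, or a warehouse API without changing this function.

```typescript
// Sketch of an engine-agnostic CallToolResult. Payload field names are
// hypothetical; content + structuredContent follow the MCP result shape.

interface AnswerPayload {
  sql: string;
  rows: Record<string, unknown>[];
  chartOption: Record<string, unknown>; // e.g. an ECharts option object
}

interface CallToolResult {
  content: { type: "text"; text: string }[];
  structuredContent?: Record<string, unknown>;
  isError?: boolean;
}

function toCallToolResult(answer: AnswerPayload, maxPreviewRows = 5): CallToolResult {
  const preview = answer.rows.slice(0, maxPreviewRows);
  return {
    // Short text preview so a text-only client still gets something useful.
    content: [
      {
        type: "text",
        text: `${answer.rows.length} rows. First ${preview.length}: ${JSON.stringify(preview)}`,
      },
    ],
    // Full payload for the iframe to render.
    structuredContent: { ...answer },
  };
}
```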

What the demo is actually querying

Three US-public-domain aviation datasets, joined and stored as Parquet:

- FAA Aircraft Registry — ~300k active US N-numbers with manufacturer, model, year manufactured, operator, home-base airport. The fleet-composition side of the question.

- BTS T-100 Market — twelve months of US flight segment data, ~15M rows after Parquet compression. Carrier, origin, destination, distance, delays. The "what did each fleet actually do" side.

- OpenFlights — global airport, airline, and route reference data. The dimensional layer that makes airport codes and carrier IDs human-readable.

A small curated lookup table bridges BTS carrier codes to FAA operator names, because the two systems don't agree on identifiers and the interesting queries depend on the join working. Known-unmatched carriers stay as nulls; the schema doc enumerates them.
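A sketch of how such a bridge behaves: look up a BTS carrier code in a curated map and return `null` for anything unmatched rather than guessing, which is the same result a LEFT JOIN against the lookup table would give. The two entries are illustrative placeholders, not rows from the author's table.

```typescript
// Sketch of a curated BTS-carrier to FAA-operator bridge. Entries are
// illustrative placeholders, not the author's data.

const CARRIER_TO_OPERATOR: ReadonlyMap<string, string> = new Map([
  ["AA", "AMERICAN AIRLINES INC"],
  ["DL", "DELTA AIR LINES INC"],
]);

function resolveOperator(btsCarrier: string): string | null {
  // Unknown codes stay null instead of being fuzzy-matched.
  return CARRIER_TO_OPERATOR.get(btsCarrier.trim().toUpperCase()) ?? null;
}

// Annotate segment rows with an operator name; unmatched carriers stay null.
function joinSegments<T extends { carrier: string }>(
  segments: T[],
): (T & { operator: string | null })[] {
  return segments.map((s) => ({ ...s, operator: resolveOperator(s.carrier) }));
}
```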

The whole bucket is public-domain or ODbL with attribution preserved in a co-hosted LICENSE.txt. None of it is sensitive. None of it requires negotiating a redistribution agreement. It's the right shape of data for a public demo — real enough to mean something to anyone who works in aerospace, free enough that I can leave it running indefinitely behind the project's $10 GCP spend cap.

If you're at a company with a lakehouse and you've been wondering what to actually build with MCP, this is the pattern. Don't wrap your CRUD APIs. Wrap the warehouse your analysts already use. Ship a chart-shaped answer back into the chat where the question got asked.

All of the above is still a moving target — the spec keeps shipping, and most of these workarounds get smaller every release.

Try it

- /chat on this site: pick an aviation starter question or ask "which operators have the oldest 737 fleets?" The iframe is the same bundle Claude Desktop loads.

- /aviation: copy-pasteable Claude Desktop config in two flavors (native HTTP and mcp-remote-wrapped). About 90 seconds end-to-end.

Endpoint: https://chris.towles.dev/mcp/aviation. Public. Throttled at the edge. No auth.
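For reference, a sketch of the mcp-remote flavor of a `claude_desktop_config.json` entry, built as an object and printed as JSON. mcp-remote is the npm package that bridges a stdio-only client to a remote HTTP MCP server; the server key `aviation` is arbitrary, and this is not a copy of the config on /aviation. The native HTTP flavor is not reproduced here.

```typescript
// Sketch of an mcp-remote entry for claude_desktop_config.json.
// The "aviation" key is arbitrary; not copied from the /aviation page.

interface StdioServerEntry {
  command: string;
  args: string[];
}

function mcpRemoteConfig(
  name: string,
  endpoint: string,
): { mcpServers: Record<string, StdioServerEntry> } {
  const url = new URL(endpoint);
  if (url.protocol !== "https:") throw new Error("expected an https endpoint");
  return {
    mcpServers: {
      // npx -y runs mcp-remote without an install prompt.
      [name]: { command: "npx", args: ["-y", "mcp-remote", url.toString()] },
    },
  };
}

const desktopConfig = mcpRemoteConfig("aviation", "https://chris.towles.dev/mcp/aviation");
console.log(JSON.stringify(desktopConfig, null, 2));
```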

The boring answer was the right answer

The funny thing about building this is how anticlimactic the payoff is. We are living through the most exotic compute moment in human history. The sequence-to-sequence trick that started this thing cost more than every cross-platform framework I've named in this post, combined. Phones run 70B models. Claude can refactor a Rails monolith between meetings.

And the answer to "how do I ship one UI to every chat" turned out to be: drop an HTML file at an MCP endpoint, let the host iframe it, postMessage when you need to talk back. That's it. There isn't a layer below.

I keep waiting for it to be more complicated than that. It isn't.

I gave up on Xamarin. I gave up on Flutter. I gave up on getting the web to feel right on phones. Sun spent billions on Java applets. Adobe spent a decade on Flash. Facebook bet React Native. Google bet Flutter. Microsoft bet Xamarin twice. None of them shipped the universal client. The chat did, by accident, while we were busy training the model that lives inside it.

For new work I'm writing one HTML bundle and pointing every host I care about at it.

HTML and JavaScript are going to outlive my kids.