The Hardest Part of AI Isn't the AI

Last week I caught myself explaining agent orchestration patterns to a coworker who'd used ChatGPT twice. His eyes glazed over somewhere around "tool calling." I kept going anyway.

That's the moment I realized I'd become the problem.

Since 2024 I've been going deeper into AI every single day. Context windows, KV caches, how models actually work under the hood. I know what tasks to give agents, how skills work, what the latest papers say. I follow the sharpest people in the field and read research directly from Anthropic, OpenAI, and DeepMind.

And none of that matters if I can't meet people where they are. I skip the fundamentals and jump straight to where I am today — talking about agent orchestration when they're still wondering why the chatbot hallucinated their lunch order. I forget that I had to learn all of this one piece at a time, and I try to shortcut that journey for others by just... skipping it.

That's a personality-level failure. And it got me thinking about what actually matters when you're building AI products.

The Thread That Crystallized It

Rahul Sengottuvelu (head of applied AI at Ramp) recently dropped a thread that maps exactly to this problem: the most valuable thing you can build in AI isn't the AI part.

Even Evan You agrees — excited for Void+.

WAGMI stands for "We're All Gonna Make It" — crypto slang I had no idea about before this thread. NGMI is the counterpart: "Not Gonna Make It." In this context, WAGMI strategies build durable value in AI products by focusing on things that evolve slowly, even as frontier models improve at breakneck speed on context windows, reasoning, and token costs.

The core idea: AI model capabilities are commoditizing quickly. The advantages from squeezing marginal gains out of today's models evaporate with the next release. The lasting wins come from building around stable fundamentals.

What's NGMI

Before getting into what works, it's worth naming what doesn't.

NGMI tactics feel productive but become irrelevant as models get smarter and cheaper:

- Custom context window optimizations
- Hybrid retrieval pipeline tweaks
- Specialized fine-tunes for narrow tasks
- Bespoke memory systems and context graphs
- In-house RL models chasing benchmark gains

These all share the same problem: you're competing on the same axis the frontier labs are improving on. Every dollar spent on a clever RAG pipeline buys work that GPT-next or Claude-next will likely make unnecessary. The foundation shifts underneath you.

The WAGMI Playbook

Here's Rahul's thread distilled, with my own experience layered in.

1. Product and UI

The bottleneck is shifting from "can the AI do it?" to "can users easily and enjoyably use it?"

Great UI turns raw capability into sticky, habitual products. Nobody switches away from a tool they love using, even if a competitor has a marginally better model underneath.

I made this mistake at work over the last few months: I built a product that does five things well but confuses anyone who didn't build it. If users can't figure it out in 30 seconds, the capability is invisible.

2. Customer Acquisition

Even the best AI tech is pointless without users benefiting from it.

This isn't an AI insight; it's the history of technology. VHS beat Betamax. Windows beat OS/2. The technically superior option often loses to the one that gets in front of users and keeps them coming back.

3. Deep Integrations

Connect your AI into users' existing tools, data systems, and workflows. Meeting users where they are reduces friction and builds defensibility through network effects and switching costs.

This is why tools like Claude Code, Cursor, and Windsurf have such strong positions. The biggest shift for me with Claude Code wasn't any single feature — it was that it worked on my data, where I already worked. I wasn't copying code in and out of a web browser anymore. The integration IS the product.

4. Agent Tooling

Better linting, CI, skill management, and feedback loops for agents. This is the developer experience layer — not the agent itself, but everything around it that makes agents shippable.

We're at a point where building a working prototype takes less time than scheduling the meeting to get approval for it. The teams that invest in agent DevEx now are the ones that will iterate fastest when the next model drop changes what's possible overnight.

5. Background Agent Infrastructure

Build reliable systems that let agents run asynchronously, in parallel, and at scale. The goal is parallelizing more work without blocking users.

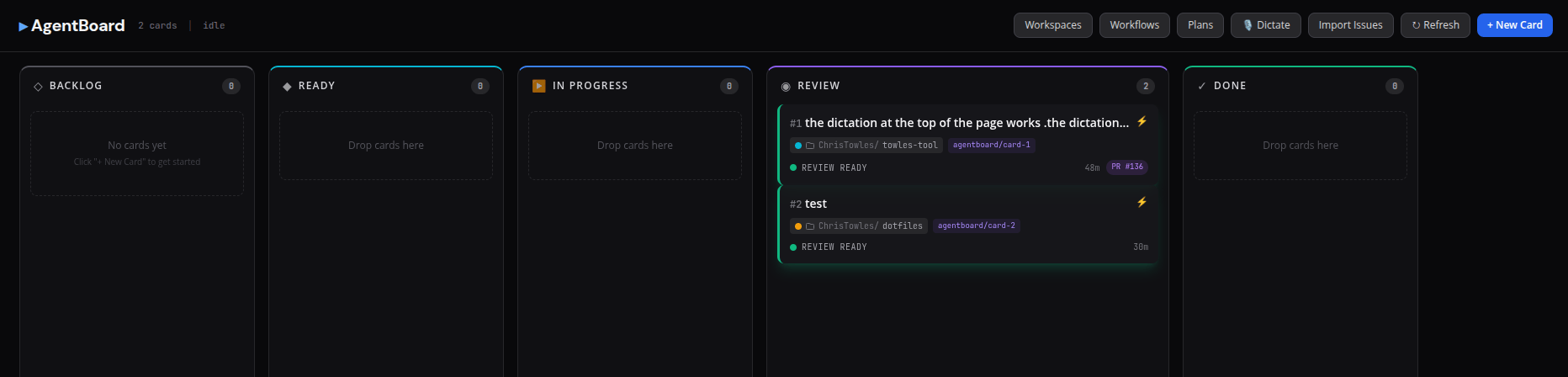

A smarter model doesn't help if you can't run 10 of them concurrently on different tasks with proper slot management, failure recovery, and status tracking. I'm working on this now in towles-tool with an AgentBoard feature: a kanban board for working with multiple agents at once, so I don't have to tab through terminal windows to find the one that needs my direction or review!

I'm betting we'll all be running k8s clusters for our agent swarms before the end of the year!
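
To make "slot management, failure recovery, and status tracking" concrete, here's a minimal Python sketch of the idea. It is not how towles-tool or any real product does it: `AgentTask`, `run_agent`, `supervise`, and `run_board` are hypothetical names, and `run_agent` is a fake stand-in that fails on purpose so the retry path has something to do.

```python
import asyncio
from dataclasses import dataclass
from enum import Enum
from typing import List, Optional


class Status(Enum):
    QUEUED = "queued"
    RUNNING = "running"
    DONE = "done"
    FAILED = "failed"


@dataclass
class AgentTask:
    name: str
    status: Status = Status.QUEUED
    attempts: int = 0
    result: Optional[str] = None


async def run_agent(task: AgentTask) -> str:
    """Hypothetical stand-in for a real agent call.

    'flaky' tasks fail on their first attempt; 'broken' tasks always fail.
    """
    await asyncio.sleep(0.01)
    if "broken" in task.name:
        raise RuntimeError(f"{task.name} crashed")
    if "flaky" in task.name and task.attempts < 2:
        raise RuntimeError(f"{task.name} crashed")
    return f"{task.name}: ok"


async def supervise(task: AgentTask, slots: asyncio.Semaphore,
                    max_attempts: int = 3) -> None:
    """Run one task inside a slot, retrying on failure and recording status."""
    async with slots:  # slot management: wait here until a slot is free
        while task.attempts < max_attempts:
            task.attempts += 1
            task.status = Status.RUNNING
            try:
                task.result = await run_agent(task)
                task.status = Status.DONE
                return
            except RuntimeError:
                # failure recovery: mark as failed, then loop to retry
                task.status = Status.FAILED


async def run_board(names: List[str], max_slots: int = 10) -> List[AgentTask]:
    """Run all tasks concurrently, with at most max_slots running at once."""
    slots = asyncio.Semaphore(max_slots)
    tasks = [AgentTask(name) for name in names]
    await asyncio.gather(*(supervise(t, slots) for t in tasks))
    return tasks


if __name__ == "__main__":
    board = asyncio.run(
        run_board(["write-docs", "flaky-tests", "broken-deploy"], max_slots=2)
    )
    for t in board:
        print(t.name, t.status.value, f"attempts={t.attempts}")
```

The semaphore is the whole "slot" mechanism here, and the `status` field is what a board UI would read. A real system would also need persistence, timeouts, and cancellation, which this sketch leaves out.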

6. Agent Verification Loops

Speed up the cycle of testing, validating, and correcting agent behavior in production. The edge comes from how quickly you can observe and improve what agents are doing, not from raw intelligence.

The team that can ship, watch, and correct agent behavior in hours instead of months has a massive advantage. The hard part isn't making the agent smarter — it's knowing when it's wrong.
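
One way to picture "knowing when it's wrong" is a set of cheap automated checks that run over every agent output and surface only the runs that need a human. The sketch below is an illustration under my own assumptions, not something from Rahul's thread: `Check`, `verify`, `triage`, and the three example checks are all hypothetical.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass
class Check:
    name: str
    passes: Callable[[str], bool]  # returns True when the output looks fine


# Hypothetical example checks; real ones would be specific to your product.
DEFAULT_CHECKS = [
    Check("non_empty", lambda out: bool(out.strip())),
    Check("no_todo_left", lambda out: "TODO" not in out),
    Check("under_length_cap", lambda out: len(out) <= 2000),
]


def verify(output: str, checks: List[Check]) -> List[str]:
    """Return the names of the checks this output failed."""
    return [c.name for c in checks if not c.passes(output)]


def triage(outputs: Dict[str, str], checks: List[Check]) -> Dict[str, List[str]]:
    """Map run id -> failed checks, keeping only runs that need review."""
    report = {}
    for run_id, output in outputs.items():
        failed = verify(output, checks)
        if failed:
            report[run_id] = failed
    return report


if __name__ == "__main__":
    runs = {
        "run-1": "Refactored the parser and added tests.",
        "run-2": "",
        "run-3": "Done. TODO: fix edge cases",
    }
    for run_id, failed in triage(runs, DEFAULT_CHECKS).items():
        print(run_id, "needs review:", ", ".join(failed))
```

The checks themselves are deliberately dumb. The point is the loop: every run gets checked, failures get routed to a person quickly, and each miss becomes a new check.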

7. Training Users and Connecting to Their Systems

This is the one I keep failing at. It's also the one that connects back to my opening story.

Claude Desktop is the best example I've seen of making AI approachable, and even that is rough for non-technical people. The gap between "installed the app" and "getting value from it daily" is massive. The more a user invests in learning your tool and connecting their systems, the higher the switching cost — not lock-in through friction, but lock-in through value. Their workflows shape around your product, and that doesn't transfer to a competitor just because they have a newer model.

The gap between "this technology is incredible" and "I can actually use this in my work" is enormous. Bridging it is a human problem, not a model problem. No amount of capability improvement fixes the disconnect between an expert builder and a first-time user.

The Reframe

The frontier labs will keep making models smarter and cheaper. That's great — it makes the WAGMI layer more valuable, not less. Every improvement in model capability increases the surface area of what your product can do, as long as you've built the infrastructure to deliver it.

UI, distribution, integrations, agent infrastructure, user workflows. These are the layers that compound. The model underneath is a commodity — the experience around it isn't.

I'm still working on that last one. Meeting people where they are instead of where I am. It turns out the hardest part of building AI products isn't the AI. It's the people.